Voice AI Evaluation Infrastructure: A Developer's Guide to Testing Voice Agents Before They Hit Production

Most voice AI teams ship without evaluation infrastructure. Here is a practical architecture for building one that catches failures before your users do.

Voice AI agents are shipping to production faster than teams can test them. The demo works, leadership signs off, and suddenly your voice agent is handling 10,000 calls a day with no evaluation infrastructure behind it.

The core problem is that a voice AI pipeline is not a single model. It is a chain of systems: speech-to-text (STT), a large language model (LLM), and text-to-speech (TTS), all running in sequence. Each one can fail independently. A transcript can be perfect while the audio output sounds robotic. Latency might hold at 200ms in staging and spike to 900ms under real traffic. The LLM might produce a flawless response, but the TTS engine mispronounces your product name.

Testing voice AI is fundamentally different from testing a chatbot or an API endpoint. You are dealing with audio signals, real-time latency constraints, accent variability, background noise, and emotional tone, all at once. Most teams skip building proper evaluation systems because the problem feels overwhelming.

This guide covers how to build voice AI evaluation infrastructure that catches failures before users do: the architecture, the metrics, the tools, and the traps teams fall into.

Why Most Teams Skip Voice AI Evaluation

The cost-benefit trap. Evaluation infrastructure does not ship features or close deals. When a voice agent demo goes well, the pressure is to move to production immediately. Engineering teams are told to add testing “later,” but later never arrives. What teams miss is that reactive firefighting can eat 30 to 50% of engineering time once the agent is live. You end up spending more time debugging production incidents than you would have spent building the testing pipeline.

Technical complexity. Voice evaluation is harder than text evaluation. With a chatbot, you can run string comparison and keyword matching. With voice AI, you need to evaluate audio quality, transcription accuracy, per-turn response latency, tone consistency, and conversation flow, each requiring different measurement approaches. Most teams come from text-based AI backgrounds, try to apply chatbot testing patterns to voice agents, and quickly realize those patterns fall short.

No standard frameworks. Until recently, there was no widely adopted voice AI testing framework. Teams faced three options: build custom (a 6 to 12 month investment), use text-based tools that miss voice-specific failures, or skip evaluation entirely. Too many chose option three. Purpose-built platforms like Future AGI have changed this by offering production-grade evaluation across audio, text, and multimodal pipelines.

No clear ownership. Voice agent evaluation sits between teams. Engineering builds the pipeline. QA handles traditional testing but lacks audio evaluation expertise. Data science reads model performance numbers, but voice-level concerns like tone and pronunciation fall outside their scope. Without a clear owner, evaluation becomes nobody’s responsibility.

The Five Layers of Voice AI Evaluation

A production-grade voice agent evaluation stack has five distinct layers. Each one catches a different class of failure.

1. Audio Pipeline Testing. Before you evaluate what your agent says, confirm that the audio pipeline itself works. This layer validates signal-to-noise ratio (SNR), codec artifact detection, echo cancellation behavior, and sample rate consistency. If the audio pipeline corrupts the signal through distortion or packet loss, every downstream system inherits the damage. Future AGI’s audio-native evaluation stack covers this layer directly, running quality checks on the raw waveform rather than relying on transcript analysis alone.
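To make two of these checks concrete, here is a minimal sketch that computes SNR against a time-aligned clean reference and confirms a WAV file's sample rate with Python's standard `wave` module. It assumes you have a clean reference for each test clip (typical for synthetic tests) and that 16 kHz is your pipeline's expected rate. Both are assumptions, and this is not how Future AGI implements its audio checks.

```python
import math
import wave
from typing import BinaryIO, Sequence


def snr_db(clean: Sequence[float], degraded: Sequence[float]) -> float:
    """Signal-to-noise ratio in dB, treating (degraded - clean) as the noise.

    Assumes both signals are time-aligned sample sequences of equal length.
    """
    if not clean or len(clean) != len(degraded):
        raise ValueError("signals must be non-empty and the same length")
    signal_power = sum(x * x for x in clean) / len(clean)
    noise_power = sum((d - c) ** 2 for c, d in zip(clean, degraded)) / len(clean)
    if noise_power == 0:
        return math.inf  # identical signals: no measurable noise
    if signal_power == 0:
        return -math.inf  # silent reference: all energy is noise
    return 10 * math.log10(signal_power / noise_power)


def sample_rate_matches(wav_file: BinaryIO, expected_hz: int = 16_000) -> bool:
    """Return True if the WAV file's sample rate equals the expected rate."""
    with wave.open(wav_file, "rb") as w:
        return w.getframerate() == expected_hz
```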

2. Speech Recognition Accuracy. Your STT engine converts audio to text, and errors here cascade through the entire pipeline. When STT turns “I want to cancel” into “I want to counsel,” the LLM has no idea the transcript is wrong. It takes that input and produces a completely irrelevant response. Measure word error rate (WER) and sentence error rate (SER), then segment results by accent group, background noise level, and domain-specific vocabulary. Build a dedicated STT evaluation pipeline and test with audio that reflects how your users actually sound, not just clean recordings.
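WER is conventionally (substitutions + deletions + insertions) / number of reference words, and SER is the share of utterances with at least one error. Below is a minimal sketch that computes both per segment. It assumes you already have human reference transcripts tagged with a segment label such as accent group or noise level; the labels in the usage are placeholders. It only case-folds. Real pipelines usually also normalize punctuation and numerals before scoring.

```python
from collections import defaultdict
from typing import Iterable


def word_edits(reference: str, hypothesis: str) -> tuple[int, int]:
    """Return (word-level Levenshtein distance, reference word count)."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    prev = list(range(len(hyp) + 1))  # distances from the empty reference prefix
    for i in range(1, len(ref) + 1):
        cur = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            cur[j] = min(prev[j] + 1,         # deletion
                         cur[j - 1] + 1,      # insertion
                         prev[j - 1] + cost)  # substitution or match
        prev = cur
    return prev[-1], len(ref)


def word_error_rate(reference: str, hypothesis: str) -> float:
    edits, n = word_edits(reference, hypothesis)
    if n == 0:
        raise ValueError("reference transcript is empty")
    return edits / n


def error_rates_by_segment(
    samples: Iterable[tuple[str, str, str]],
) -> dict[str, tuple[float, float]]:
    """Map segment -> (corpus WER, SER) from (segment, reference, hypothesis) rows.

    Assumes every segment has at least one non-empty reference.
    """
    edits, words = defaultdict(int), defaultdict(int)
    sentences, wrong = defaultdict(int), defaultdict(int)
    for segment, ref, hyp in samples:
        e, n = word_edits(ref, hyp)
        edits[segment] += e
        words[segment] += n
        sentences[segment] += 1
        wrong[segment] += 1 if e > 0 else 0
    return {s: (edits[s] / words[s], wrong[s] / sentences[s]) for s in sentences}
```

With this definition, “I want to cancel” transcribed as “I want to counsel” scores a WER of 0.25: one substitution over four reference words.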

3. LLM Response Quality. A clean transcript is only half the problem. The LLM still needs to produce a response that is factually correct, contextually relevant, complete enough to be useful, and compliant with your business logic. Key evaluation criteria include task completion accuracy, hallucination detection, policy compliance, and escalation behavior. A common failure: the LLM gives a technically correct answer that does not actually solve the user’s problem.
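Policy compliance and escalation behavior are the easiest of these criteria to automate, because part of them can be written as deterministic rules that run on every turn before slower model-based or human scoring. Here is a minimal sketch with two hypothetical rules: the agent must not use banned phrases, and it must escalate when the user asks for a human. The phrase lists and pattern are placeholders for your own policy.

```python
import re
from dataclasses import dataclass, field


@dataclass
class PolicyResult:
    passed: bool
    violations: list[str] = field(default_factory=list)


# Hypothetical business rules; replace with your own policy definitions.
BANNED_PHRASES = ["guaranteed refund", "legal advice"]
HUMAN_REQUEST = re.compile(r"\b(human|real person|representative)\b", re.IGNORECASE)


def check_turn(user_text: str, agent_text: str, escalated: bool) -> PolicyResult:
    """Run rule-based policy checks on a single user/agent turn."""
    violations = []
    lowered = agent_text.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            violations.append(f"banned phrase: {phrase!r}")
    if HUMAN_REQUEST.search(user_text) and not escalated:
        violations.append("user asked for a human but agent did not escalate")
    return PolicyResult(passed=not violations, violations=violations)
```

Rules like these only catch what you can specify up front. Hallucinations and technically correct but unhelpful answers still need model-based or human scoring.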

4. Text-to-Speech Quality. The TTS engine converts the LLM response back to audio, and this is where many hidden failures live. A response that reads perfectly as text might sound unnatural, carry incorrect emphasis, or mispronounce key terms when spoken aloud. Evaluation here covers naturalness scoring, prosody analysis (rhythm, stress, intonation), pronunciation accuracy for brand and technical terms, and emotional tone matching. If a frustrated customer gets a cheerful response, that is a failure transcripts will never catch.
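One common proxy for pronunciation accuracy is a round-trip check. You synthesize the response, transcribe the synthesized audio with an STT engine, and verify that key terms survived. It is only a proxy. The sketch below assumes the round-trip transcript is already available, leaves the TTS and STT calls out of scope, and uses a hypothetical term list. Because STT errors also show up as misses, have someone listen to any flagged term before filing it as a TTS bug.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse runs of non-alphanumeric characters to single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def missing_terms(terms: list[str], response_text: str, roundtrip_transcript: str) -> list[str]:
    """Terms present in the LLM response but absent from the STT transcript of its TTS audio."""
    said = f" {normalize(response_text)} "
    heard = f" {normalize(roundtrip_transcript)} "
    return [
        term for term in terms
        if f" {normalize(term)} " in said and f" {normalize(term)} " not in heard
    ]
```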

5. End-to-End Conversation Flow. Individual components can all pass their tests while the overall conversation still fails. This layer covers multi-turn coherence, context retention, turn-taking behavior, and full task resolution. A password reset flow might require five turns. Each turn might work individually, but if the agent loses context between turns three and four, the interaction breaks.
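Here is a minimal sketch of one context-retention check. It assumes your logs expose the agent's tracked dialogue state (filled slots) after each turn; the slot names and state format are hypothetical. It reports the first turn at which a previously filled slot silently disappears, which is exactly the failure in the password reset example.

```python
from typing import Optional


def first_context_loss(states: list[dict[str, object]]) -> Optional[tuple[int, str]]:
    """Return (1-based turn number, slot name) where a filled slot was dropped, or None.

    Assumes slots should persist once filled; flows that intentionally
    clear slots would need an allow-list.
    """
    seen: dict[str, object] = {}
    for turn, state in enumerate(states, start=1):
        for slot in seen:
            if slot not in state:
                return turn, slot
        seen.update(state)
    return None
```

For a five-turn reset where the email slot vanishes after turn three, the function returns `(4, "email")`, pointing at the exact turn where the flow breaks.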

Metrics Framework

You need metrics across four categories to get a complete picture.

Infrastructure metrics cover end-to-end latency (target under 300ms for conversational use), audio packet loss rate (under 1%), STT processing time (under 100ms), TTS generation time (under 150ms), system uptime (99.9%+), and concurrent call capacity.
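The numeric targets above can go into a threshold table that a test run or monitor checks automatically. This sketch assumes each metric arrives as one measured number per run. Concurrent call capacity is left out because no target is given for it.

```python
# Targets from the infrastructure metrics above; "max" means lower is better.
THRESHOLDS = {
    "end_to_end_latency_ms": ("max", 300.0),
    "packet_loss_pct": ("max", 1.0),
    "stt_processing_ms": ("max", 100.0),
    "tts_generation_ms": ("max", 150.0),
    "uptime_pct": ("min", 99.9),
}


def threshold_violations(measured: dict[str, float]) -> list[str]:
    """List every metric that misses its target; unmeasured metrics are flagged too."""
    problems = []
    for name, (kind, limit) in THRESHOLDS.items():
        value = measured.get(name)
        if value is None:
            problems.append(f"{name}: not measured")
        elif kind == "max" and value >= limit:
            problems.append(f"{name}: {value} >= {limit}")
        elif kind == "min" and value < limit:
            problems.append(f"{name}: {value} < {limit}")
    return problems
```

If the stages run strictly in sequence, the STT and TTS budgets alone use 250ms of the 300ms end-to-end target. Streaming pipelines that overlap stages have more headroom, so set the per-component numbers against your own architecture.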

Model performance metrics measure how well each AI component performs its core job. For STT, track WER segmented by accent, noise level, and domain vocabulary. For the LLM, track task completion rate, hallucination frequency, and response relevance. For TTS, track mean opinion score (MOS) for naturalness and pronunciation error rate.
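MOS is conventionally the mean of listener ratings on a 1 to 5 scale. With small listener panels, the spread matters as much as the mean, so this sketch also reports the half-width of an approximate 95% confidence interval. The normal approximation it uses is an assumption and is rough for small panels.

```python
import math
import statistics


def mos_with_ci(ratings: list[int]) -> tuple[float, float]:
    """Return (mean opinion score, half-width of an approximate 95% CI)."""
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be on the 1-5 scale")
    mean = statistics.fmean(ratings)
    half_width = 1.96 * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mean, half_width
```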

User experience metrics include first-call resolution rate, conversation abandonment rate, average handle time, user satisfaction (via post-call surveys or sentiment analysis), and escalation rate to human agents. Read these alongside model metrics, because acceptable thresholds depend on the domain: a 5% WER might be acceptable for general conversation but catastrophic in banking, where customers speak account numbers aloud.
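Most of these metrics are simple ratios over session records. This sketch assumes a hypothetical per-session record with boolean outcome flags. How you set those flags is a policy decision. For example, you might count a repeat call within some window as “not resolved on first call.”

```python
from dataclasses import dataclass


@dataclass
class Session:
    resolved_first_call: bool
    abandoned: bool
    escalated: bool
    handle_time_s: float


def ux_summary(sessions: list[Session]) -> dict[str, float]:
    """Aggregate per-session outcome flags into user experience rates."""
    n = len(sessions)
    if n == 0:
        raise ValueError("no sessions")
    return {
        "first_call_resolution": sum(s.resolved_first_call for s in sessions) / n,
        "abandonment_rate": sum(s.abandoned for s in sessions) / n,
        "escalation_rate": sum(s.escalated for s in sessions) / n,
        "avg_handle_time_s": sum(s.handle_time_s for s in sessions) / n,
    }
```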

Business impact metrics connect technical performance to outcomes: cost per resolved interaction, containment rate, revenue impact for sales use cases, and customer retention. Without these, every engineering investment decision becomes a gut call.
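Two of these business metrics are easy to compute once sessions are labeled. This sketch uses two assumed definitions. Containment rate is the share of calls completed without escalation to a human. Cost per resolved interaction is total cost divided by resolved calls. Organizations define both differently, so match whatever your business already reports.

```python
def containment_rate(total_calls: int, escalated_calls: int) -> float:
    """Share of calls handled end to end without a human handoff."""
    if total_calls <= 0:
        raise ValueError("total_calls must be positive")
    return (total_calls - escalated_calls) / total_calls


def cost_per_resolved(total_cost: float, resolved_calls: int) -> float:
    """Total spend (telephony, STT, LLM, TTS, human handoffs) per resolved call."""
    if resolved_calls <= 0:
        raise ValueError("resolved_calls must be positive")
    return total_cost / resolved_calls
```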

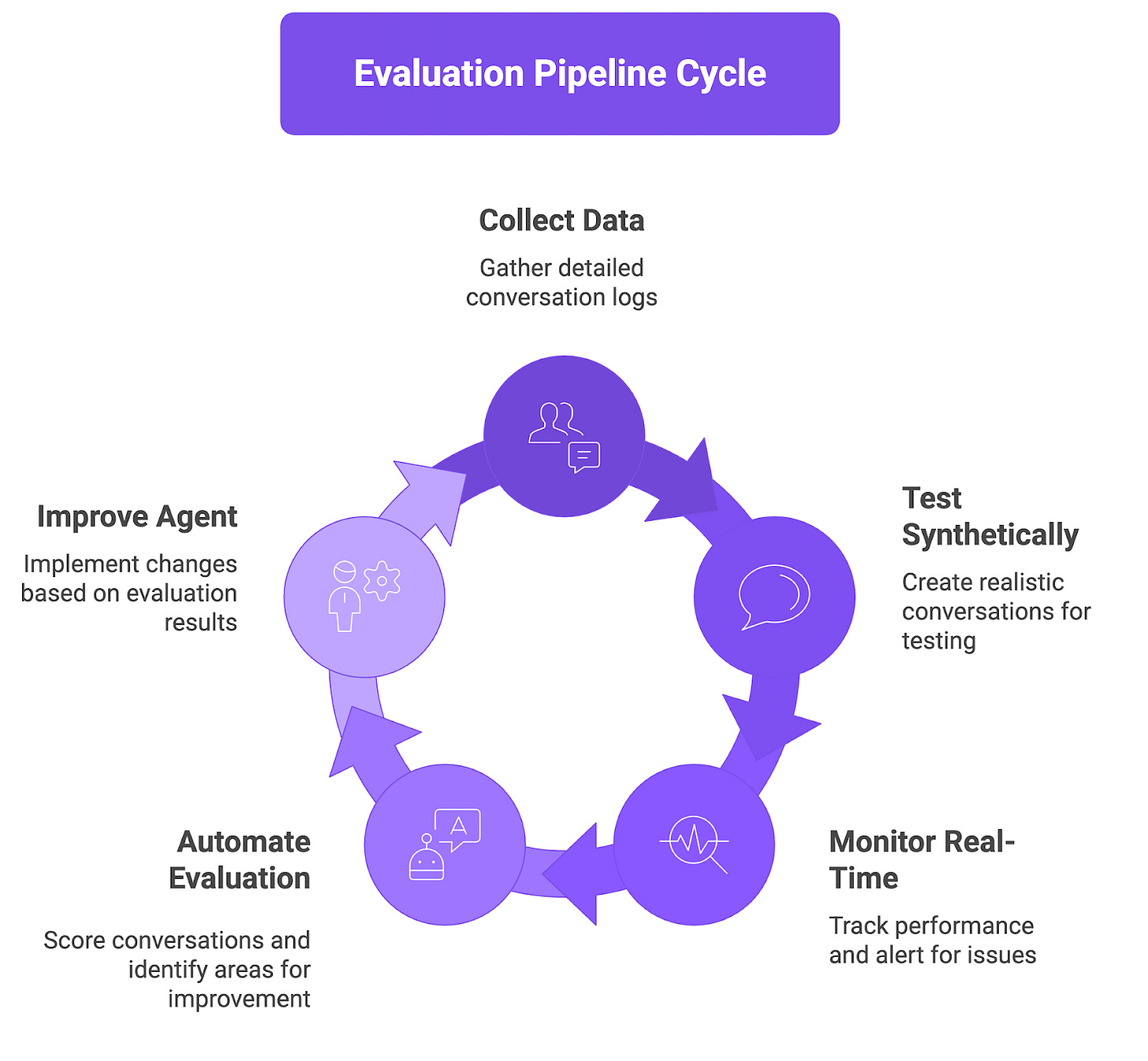

Building the Evaluation Pipeline

Data collection. Capture every conversation with enough detail to reconstruct and evaluate it. Log full audio recordings (both channels), timestamped turn-level transcripts, LLM input/output pairs with prompt metadata, TTS audio output, and session metadata (device, network conditions, locale). Store raw audio alongside transcripts. Transcript-only logging misses audio quality issues, tone problems, and latency spikes visible only in the waveform.
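Here is a minimal sketch of log records covering the fields listed above, serialized as JSON Lines. The field names and storage URIs are assumptions. The important design choice is that each turn points at its raw audio for both channels instead of keeping only the transcript.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TurnLog:
    session_id: str
    turn_index: int
    started_at_ms: int        # timestamp of the user's speech onset
    user_audio_uri: str       # raw caller channel
    agent_audio_uri: str      # TTS audio actually played to the caller
    transcript: str           # STT output for this turn
    stt_confidence: float
    llm_input: str            # full prompt sent to the LLM
    llm_output: str
    prompt_version: str       # prompt metadata for later comparisons
    latency_ms: dict[str, float] = field(default_factory=dict)  # per pipeline stage


@dataclass
class SessionMeta:
    session_id: str
    device: str
    network: str
    locale: str


def to_jsonl(record) -> str:
    """Serialize a dataclass record as one JSON Lines row."""
    return json.dumps(asdict(record), sort_keys=True)
```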

Synthetic testing. You cannot wait for real users to find your bugs. Build test scenarios across four categories: happy path (the 20 most common intents handled correctly), edge cases (unusual requests, ambiguous inputs, out-of-scope questions), adversarial inputs (background noise, accent variation, overlapping speech, fast speech), and regression scenarios (every previously found bug converted into an automated test). Future AGI’s Simulate product generates thousands of realistic voice conversations across diverse personas, accents, and styles, evaluating the actual audio output rather than just the transcript.
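One way to keep coverage systematic is to generate the scenario list as a cross product of intents and conditions, then add regression cases from failed sessions. This sketch assumes hypothetical intent, accent, and noise labels. A synthetic voice generator would then render each spec into audio, and that step is out of scope here.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    category: str   # happy_path, edge_case, adversarial, or regression
    intent: str
    accent: str
    noise: str


def build_matrix(intents, accents, noises, category="happy_path"):
    """Every intent crossed with every accent and noise condition."""
    return [Scenario(category, i, a, n) for i, a, n in itertools.product(intents, accents, noises)]


def regression_scenarios(failed_sessions):
    """Pin previously failed sessions as regression cases.

    Assumes each failed session is a dict with 'intent', 'accent', and 'noise' keys.
    """
    return [Scenario("regression", f["intent"], f["accent"], f["noise"]) for f in failed_sessions]
```

The matrix grows quickly: 20 intents × 5 accents × 4 noise levels is already 400 scenarios. You may want to sample from it on every commit and run the full set on a schedule.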

Real-time monitoring. Production monitoring operates at two speeds. Real-time alerting tracks per-turn latency, STT confidence scores, conversation abandonment events, and per-component error rates. Batch analysis (daily or weekly) surfaces quality trends, slow degradation patterns, emerging edge cases, and model version comparisons. Together, these form your voice AI observability stack.
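The real-time half can start as a rolling window per signal. This sketch uses a hypothetical alert rule: fire when more than 10% of the last 50 turns exceed a 300ms latency budget or fall below 0.6 STT confidence. The window size and thresholds are placeholders to tune against your own traffic.

```python
from collections import deque


class RollingAlert:
    """Fires when the share of bad turns in the last `window` exceeds `max_bad_ratio`."""

    def __init__(self, window: int = 50, max_bad_ratio: float = 0.10):
        self.events: deque = deque(maxlen=window)
        self.max_bad_ratio = max_bad_ratio

    def observe(self, is_bad: bool) -> bool:
        """Record one turn; return True if the alert condition holds now."""
        self.events.append(is_bad)
        if len(self.events) < self.events.maxlen:
            return False  # wait for a full window to avoid noisy early alerts
        return sum(self.events) / len(self.events) > self.max_bad_ratio


latency_alert = RollingAlert()
confidence_alert = RollingAlert()


def on_turn(latency_ms: float, stt_confidence: float) -> list[str]:
    """Feed one turn's signals into both monitors and return any alerts raised."""
    alerts = []
    if latency_alert.observe(latency_ms > 300):
        alerts.append("latency")
    if confidence_alert.observe(stt_confidence < 0.6):
        alerts.append("stt_confidence")
    return alerts
```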

Evaluation automation. Build a pipeline that pulls conversation logs, scores them against your metrics framework, flags anything below your quality bar, generates actionable reports, and routes failed conversations back into your test suite.
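Put together, the automation loop can be a small batch job. This sketch is deliberately generic. `score_conversation` is a hypothetical stand-in for whatever scorers you use, such as WER, policy checks, or model-based judges, and it is assumed to return scores where higher is better. The quality bar of 0.8 is a placeholder.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class EvalReport:
    total: int
    failed_ids: list[str]

    @property
    def pass_rate(self) -> float:
        return 1.0 if self.total == 0 else 1 - len(self.failed_ids) / self.total


def run_batch(
    conversations: Iterable[dict],
    score_conversation: Callable[[dict], dict[str, float]],
    quality_bar: float = 0.8,
    regression_suite: Optional[list[dict]] = None,
) -> EvalReport:
    """Score each logged conversation; any metric below the bar fails it.

    Failed conversations are appended to `regression_suite` so they become
    test cases for the next release.
    """
    failed = []
    total = 0
    for convo in conversations:
        total += 1
        scores = score_conversation(convo)
        if any(value < quality_bar for value in scores.values()):
            failed.append(convo["id"])
            if regression_suite is not None:
                regression_suite.append(convo)
    return EvalReport(total=total, failed_ids=failed)
```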

How Future AGI Fits In

Future AGI is a platform built for engineering and optimizing AI agents across text, image, and audio. What makes it relevant for voice evaluation is its audio-native capability: proprietary Turing models run directly on the audio waveform to evaluate tone, timing, naturalness, and quality, catching problems that transcript-only tools miss entirely.

The workflow is straightforward. Use Simulate to generate realistic test conversations at scale against your agent. Connect your existing voice infrastructure (Vapi, Retell, LiveKit). Define evaluation criteria using built-in templates for latency, audio quality, and tone consistency, then add custom evals for your specific requirements. Run thousands of concurrent tests with varied accents, personas, and noise levels, all evaluated at the audio level.

The Observe module provides real-time production monitoring with trace-level detail. Pick any conversation, replay it, and trace problems to the exact pipeline stage (STT, LLM, or TTS). Evaluation data feeds directly into optimization workflows, creating a closed loop where every production conversation improves agent quality.

Future AGI integrates with LangChain, Langfuse, OpenTelemetry, Salesforce, AWS Bedrock, and audio providers including Deepgram, ElevenLabs, and PlayHT. The open-source TraceAI SDK gives teams full instrumentation control without vendor lock-in.

Common Pitfalls

Ignoring latency until production. Teams optimize for accuracy during development and discover latency issues only under real traffic. Set target thresholds per component from day one and treat violations as test failures. Monitor p50, p95, and p99 latency distributions, not just averages.
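A short illustration of why averages hide the problem. It uses the nearest-rank percentile definition, which is one of several conventions; monitoring tools may interpolate instead. The latency sample is made up: 95 turns at 200ms and 5 turns at 2,000ms.

```python
import statistics


def percentile_nearest_rank(samples: list[float], p: int) -> float:
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need samples and 0 < p <= 100")
    ordered = sorted(samples)
    rank = -(-p * len(ordered) // 100)  # ceil(p * n / 100) using integer math
    return ordered[rank - 1]


latencies_ms = [200.0] * 95 + [2000.0] * 5
print(statistics.fmean(latencies_ms))             # 290.0: looks fine against a 300ms target
print(percentile_nearest_rank(latencies_ms, 50))  # 200.0
print(percentile_nearest_rank(latencies_ms, 95))  # 200.0
print(percentile_nearest_rank(latencies_ms, 99))  # 2000.0: the slow tail the mean hides
```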

Over-optimizing accuracy at the expense of speed. Chasing the lowest possible WER with the largest models often produces a system too slow for real-time conversation. Always evaluate the exact stack you plan to ship.

Insufficient edge case coverage. Happy path tests look great. Production traffic includes accents, noise, interruptions, and unexpected requests you never tested. Pull failed conversations from production, categorize them, and convert them into automated tests. Use synthetic generation to continuously expand coverage.

Neglecting cross-functional metrics. Engineering tracks technical metrics. Product tracks business metrics. Neither connects the dots. Build a unified dashboard mapping technical metrics to user experience and business outcomes so you can trace a containment rate drop back to its root cause.

FAQs

Q: What is voice AI evaluation infrastructure? It is the complete set of tools, pipelines, and processes that measure whether your voice agent works correctly in production, covering audio quality, STT accuracy, LLM response scoring, TTS quality, and end-to-end conversation flow analysis.

Q: How is voice AI testing different from chatbot testing? Chatbot testing mostly involves string comparison and keyword matching. A voice AI testing framework must handle audio signal quality, transcription accuracy across accents and noisy environments, natural-sounding speech, real-time latency, and emotional tone. Failures in any single layer cascade through the rest of the pipeline.

Q: What metrics should I track? Track across four layers: infrastructure (latency, uptime), model performance (WER, response accuracy, naturalness scores), user experience (first-call resolution, abandonment, satisfaction), and business impact (cost per interaction, containment rate, revenue). No single metric tells the full story.

Q: How many test scenarios do I need? Start with 50 scenarios covering your most common intents. Expand to 200+ by adding edge cases, accent variations, noisy environments, and adversarial inputs. Use synthetic generation to keep growing coverage. Turn every production failure into a new test case.

Q: Can I use chatbot testing tools for voice AI? Only for the text/LLM layer. Audio quality issues, latency spikes, pronunciation errors, tone mismatches, and accent-related STT failures are invisible to text-only tools. You need audio-native evaluation alongside text-based checks.

Q: How does Future AGI help with voice AI evaluation? Future AGI runs proprietary models directly on the audio waveform, catching problems transcript-only tools miss. It generates thousands of synthetic test scenarios, provides real-time monitoring with trace-level detail, flags regressions automatically, and integrates with popular voice AI frameworks and CI/CD pipelines.