Real-Time LLM Evaluation: Continuous Testing for Production AI


1. Introduction

"75% of AI projects fail to reach production" – this statistic haunts every AI team. The symptoms are familiar: sudden latency spikes crushing user experience, silent regressions discovered only when users complain about wrong outputs, and brittle evaluation methods that work in testing but crumble under real-world conditions.

Traditional benchmarks like GLUE and SuperGLUE excel at controlled testing but miss critical production issues. They can't detect data drift, adversarial inputs, or performance degradation under load. When models achieve high scores on these static tests, teams can get a false sense of readiness.

Real-time evaluation changes this equation by monitoring essential metrics continuously:

Latency and throughput during demand spikes

Concept and data drift when inputs deviate from training patterns

Toxicity and safety scores to maintain acceptable output boundaries

This shift transforms incident response from reactive firefighting to proactive maintenance.

2. Understanding Real-Time LLM Evaluation

2.1 Traditional vs. Real-Time Evaluation Comparison

Traditional benchmarks provide snapshots but remain blind to live issues. They catch broad performance gaps but miss microbursts of failure: a 10-second slowdown or a sudden spike in harmful outputs that frustrates users.

Real-time systems flag these events immediately, tying them to specific inputs. This enables teams to deploy fixes before issues scale, shifting from incident reaction to prevention.

2.2 Production Challenges

Moving LLMs to production introduces unique hurdles that static tests can't address:

Scale: Thousands of concurrent requests expose performance bottlenecks invisible in small-scale tests.

Variability: Real user inputs include emoji, slang, and typos. Models fail unpredictably when encountering unfamiliar patterns.

Expectations: Users demand near-instant responses with high reliability. Even brief delays erode trust.

Consider the stakes: by one widely cited estimate, retailers lose $1.1 trillion globally to slow data responses. When your LLM hallucinates or slows down, every minute of blind operation costs money and reputation.

3. Core Components of Real-Time Evaluation Systems

3.1 Metrics Collection Layer

This foundation captures essential performance data from live traffic:

Performance Metrics: Track API latency and requests per second to catch slowdowns before they affect users.

Quality Measures: Compare outputs against ground truth or use LLM-as-judge methods like G-Eval for unstructured tasks.

Safety Measures: Deploy toxicity classifiers to detect harmful or biased content in real time.
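To make the collection layer concrete, here is a minimal Python sketch that wraps each model call, records its latency and error status, and runs pluggable scorers on the output. The scorer functions (for example a toxicity classifier or an LLM-as-judge call) are hypothetical placeholders, and the `MetricsCollector` and `RequestMetrics` names are illustrative rather than taken from any specific tool.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class RequestMetrics:
    """One record per LLM call: latency plus named quality/safety scores."""
    latency_ms: float
    scores: Dict[str, float] = field(default_factory=dict)
    error: bool = False


class MetricsCollector:
    def __init__(self, scorers: Dict[str, Callable[[str, str], float]]):
        # Hypothetical scorers: metric name -> function(prompt, output) -> score,
        # e.g. a toxicity classifier or an LLM-as-judge call.
        self.scorers = scorers
        self.records: List[RequestMetrics] = []

    def observe(self, call_model: Callable[[str], str], prompt: str) -> str:
        start = time.perf_counter()
        try:
            output = call_model(prompt)
        except Exception:
            # Record the failed call with its latency, then re-raise for the caller.
            elapsed = (time.perf_counter() - start) * 1000
            self.records.append(RequestMetrics(latency_ms=elapsed, error=True))
            raise
        elapsed = (time.perf_counter() - start) * 1000
        scores = {name: fn(prompt, output) for name, fn in self.scorers.items()}
        self.records.append(RequestMetrics(latency_ms=elapsed, scores=scores))
        return output
```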

3.2 Processing Pipeline

Raw metrics become actionable signals through intelligent processing:

Stream Processing Architecture: Tools like Apache Flink or Kafka Streams enable continuous metric collection and analysis, adapting to traffic fluctuations.

Real-Time Scoring: Use sliding windows and incremental scoring for moving averages, percentile latencies, and anomaly detection within seconds.
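A sliding window can be sketched with a deque of timestamped samples. In this illustrative version (class and parameter names are assumptions), the moving average is maintained incrementally with a running sum, while the percentile uses the nearest-rank method by sorting the window on read.

```python
import math
import time
from collections import deque
from typing import Deque, Optional, Tuple


class SlidingWindowLatency:
    """Latency samples from the last `window_s` seconds, scored on demand."""

    def __init__(self, window_s: float = 60.0):
        self.window_s = window_s
        self.samples: Deque[Tuple[float, float]] = deque()  # (timestamp, latency_ms)
        self._sum = 0.0  # running total, so the moving average is O(1)

    def add(self, latency_ms: float, ts: Optional[float] = None) -> None:
        ts = time.time() if ts is None else ts
        self.samples.append((ts, latency_ms))
        self._sum += latency_ms
        self._evict(ts)

    def _evict(self, now: float) -> None:
        # Drop samples that have slid out of the window.
        while self.samples and self.samples[0][0] <= now - self.window_s:
            _, old = self.samples.popleft()
            self._sum -= old

    def mean(self, now: Optional[float] = None) -> float:
        """Moving average; pass `now` to evict stale samples first."""
        if now is not None:
            self._evict(now)
        return self._sum / len(self.samples) if self.samples else float("nan")

    def percentile(self, p: float, now: Optional[float] = None) -> float:
        """Nearest-rank percentile; pass `now` to evict stale samples first."""
        if now is not None:
            self._evict(now)
        values = sorted(lat for _, lat in self.samples)
        if not values:
            return float("nan")
        rank = max(1, math.ceil(p * len(values) / 100))
        return values[rank - 1]
```

Sorting on read is fine for modest windows; high-volume pipelines typically switch to streaming quantile sketches such as t-digest, or let the stream processor compute percentiles.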

Alert Routing: Configure threshold-based alerts to services like PagerDuty and Slack. Example: p95 latency exceeding 500ms triggers immediate notification.
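Threshold routing reduces to comparing each windowed metric with a rule and handing breaches to a notifier. In this sketch the notifiers are hypothetical callables; in practice each would wrap an integration such as a PagerDuty Events API call or a Slack incoming webhook. The rule structure and channel keys are illustrative assumptions.

```python
from typing import Callable, Dict, List, NamedTuple


class ThresholdRule(NamedTuple):
    metric: str       # e.g. "p95_latency_ms"
    threshold: float  # alert when the value is strictly above this
    channel: str      # key into the (hypothetical) notifier map, e.g. "pagerduty"


def evaluate_and_route(metrics: Dict[str, float],
                       rules: List[ThresholdRule],
                       notifiers: Dict[str, Callable[[str], None]]) -> List[str]:
    """Check current metric values against each rule and send breaches to their channel."""
    sent = []
    for rule in rules:
        value = metrics.get(rule.metric)
        if value is None or value <= rule.threshold:
            continue  # metric missing from this window, or within bounds
        message = f"{rule.metric}={value:g} exceeds {rule.threshold:g}"
        notifiers[rule.channel](message)
        sent.append(message)
    return sent
```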

3.3 Feedback Integration

Evaluation pays off only when its insights drive system improvements:

User Feedback Loops: Collect ratings, thumbs-up/down, and error reports to correlate technical metrics with user satisfaction.

Automated Mitigation: Deploy AI agents or rule-based scripts to filter harmful content before it reaches users.
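A rule-based mitigation step might look like the sketch below: block the response when it matches a blocked term, otherwise redact email addresses before returning it. The patterns, fallback message, and function name are illustrative assumptions; production filters typically pair curated rules with a trained safety classifier.

```python
import re
from typing import List, Tuple

# Toy rules for illustration only (hypothetical); real filters load curated
# pattern lists and usually add an ML safety classifier.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
FALLBACK = "Sorry, I can't help with that request."


def mitigate(output: str, blocked_terms: List[str]) -> Tuple[str, str]:
    """Return (text_to_show, action), where action is 'blocked', 'redacted' or 'passed'."""
    for term in blocked_terms:
        # Word boundaries avoid flagging harmless words that merely contain a term.
        if re.search(rf"\b{re.escape(term)}\b", output, flags=re.IGNORECASE):
            return FALLBACK, "blocked"
    redacted, count = EMAIL_RE.subn("[redacted email]", output)
    if count:
        return redacted, "redacted"
    return output, "passed"
```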

Production Data Insights: Retrain models periodically on curated real-world examples to address language evolution and edge cases.
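Curating retraining data usually starts with a selection rule over logged interactions. This sketch assumes each log record carries a user rating and an LLM-as-judge score (both hypothetical fields) and keeps one well-rated example per normalized prompt; the 0.8 cutoff is an illustrative assumption.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class LoggedExample:
    prompt: str
    response: str
    user_rating: int    # hypothetical: +1 thumbs-up, -1 thumbs-down, 0 unrated
    judge_score: float  # hypothetical: 0-1 score from an LLM-as-judge


def curate(examples: Iterable[LoggedExample], min_judge: float = 0.8) -> List[LoggedExample]:
    """Keep well-rated examples for fine-tuning, one per normalized prompt."""
    seen = set()
    curated = []
    for ex in examples:
        key = " ".join(ex.prompt.lower().split())  # collapse case and whitespace
        if key in seen:
            continue
        if ex.user_rating > 0 and ex.judge_score >= min_judge:
            seen.add(key)
            curated.append(ex)
    return curated
```

Poorly rated examples are also useful, but usually as candidates for human relabeling rather than as direct training targets.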

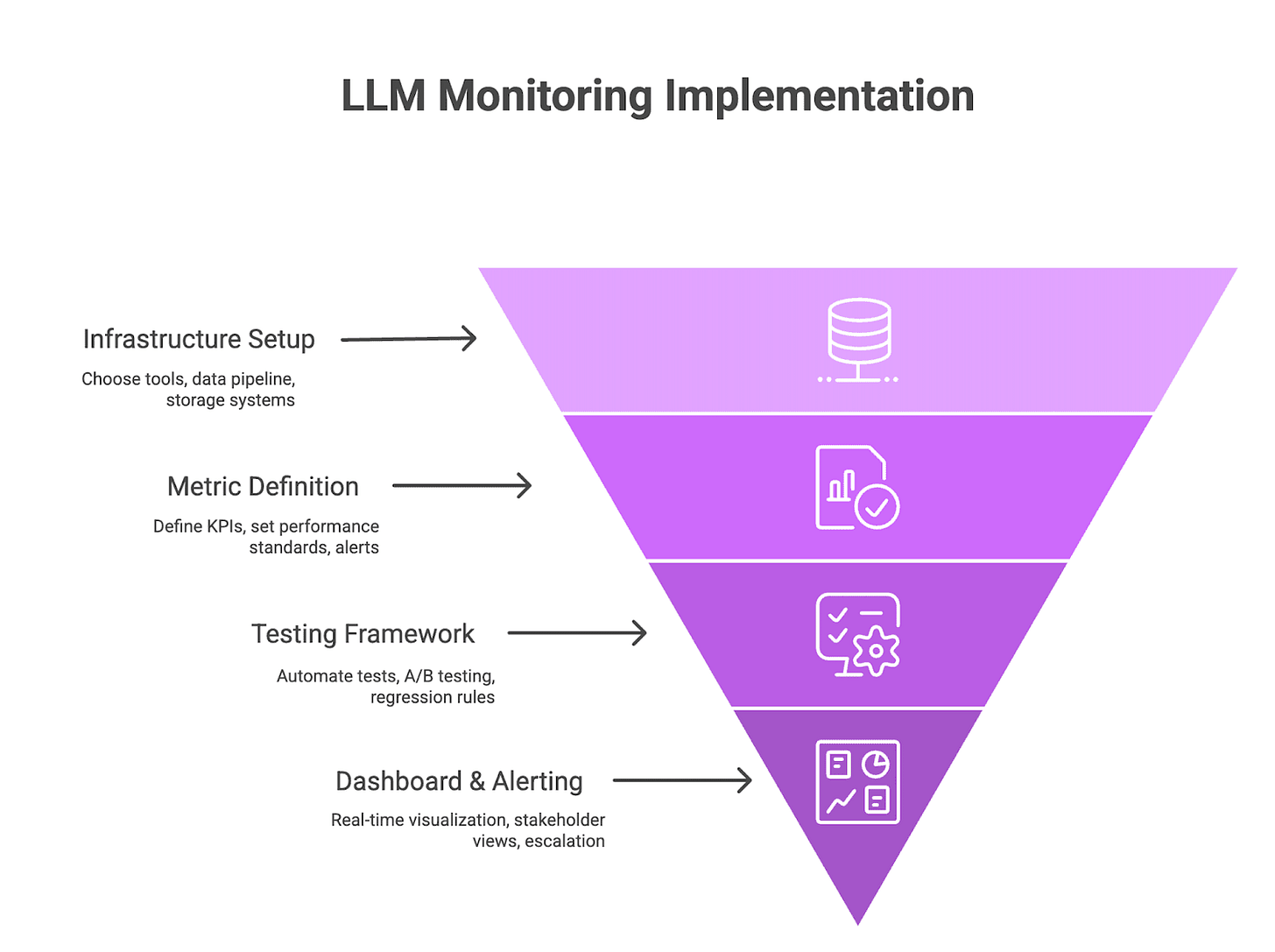

4. Step-by-Step Implementation Guide

Step 1: Infrastructure Setup

Monitoring Platform Selection: Choose tools that offer end-to-end evaluation and tracing, such as Future AGI.

Data Pipeline Integration: Use OpenTelemetry (OTel) to log LLM requests and responses. Configure your application framework to emit structured events with timestamps, model IDs, prompts, responses, and session metadata.
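The structured event described above could be serialized as one JSON line per request, as in this sketch. The field names and the `make_llm_event` helper are assumptions. When using OpenTelemetry, the same data would typically become span attributes, and OpenTelemetry's generative-AI semantic conventions define standard names such as `gen_ai.request.model`.

```python
import json
import time
import uuid
from typing import Any, Dict, Optional


def make_llm_event(model_id: str, prompt: str, response: str,
                   session_id: str, latency_ms: float,
                   metadata: Optional[Dict[str, Any]] = None) -> str:
    """Serialize one request/response pair as a JSON log line (field names are illustrative)."""
    event = {
        "event_id": str(uuid.uuid4()),
        "timestamp": time.time(),  # Unix epoch seconds
        "model_id": model_id,
        "session_id": session_id,
        "prompt": prompt,
        "response": response,
        "latency_ms": latency_ms,
        "metadata": metadata or {},
    }
    return json.dumps(event, ensure_ascii=False)
```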

Storage Systems: Maintain detailed logs for 30-90 days, aggregating older data into summaries for long-term trend analysis.
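A retention job can split events by age: keep raw events inside the window and collapse older ones into daily summaries. This sketch assumes a 30-day window and events carrying a timezone-aware `ts` and a `latency_ms` field; both names and the summary fields are illustrative.

```python
import statistics
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, List, Tuple

RETENTION_DAYS = 30  # assumption: low end of the 30-90 day range in the text


def roll_up(events: List[dict], now: datetime) -> Tuple[List[dict], Dict[str, dict]]:
    """Return (raw events to keep, per-day summaries of older events)."""
    cutoff = now - timedelta(days=RETENTION_DAYS)
    keep: List[dict] = []
    old_by_day: Dict[str, List[float]] = defaultdict(list)
    for ev in events:
        if ev["ts"] >= cutoff:
            keep.append(ev)
        else:
            # Group by calendar date in the event's own timezone.
            old_by_day[ev["ts"].date().isoformat()].append(ev["latency_ms"])
    summaries = {
        day: {"count": len(lats),
              "mean_latency_ms": statistics.fmean(lats),
              "max_latency_ms": max(lats)}
        for day, lats in old_by_day.items()
    }
    return keep, summaries
```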

Step 2: Metric Definition and Baseline Establishment

KPI Selection: Define performance metrics (p50/p95 latency, throughput), quality metrics (accuracy, relevance), and safety metrics (bias, toxicity scores).

Baseline Setting: Conduct pre-production load tests to establish normal latency and error rates. Use historical data when available to set reasonable drift and correctness thresholds.
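Baselines from a load test can be turned into thresholds directly. This sketch uses `statistics.quantiles` for p50 and p95 and adds a headroom factor to the observed p95; the 1.2 headroom and the function name are assumptions to tune, not recommended values.

```python
import statistics
from typing import Dict, List


def baseline_thresholds(latencies_ms: List[float], errors: int, requests: int,
                        headroom: float = 1.2) -> Dict[str, float]:
    """Derive baseline KPIs and an alert threshold from one load-test run."""
    # quantiles(n=100) returns the 99 cut points for the 1st..99th percentiles.
    cuts = statistics.quantiles(latencies_ms, n=100)
    p50, p95 = cuts[49], cuts[94]
    return {
        "p50_ms": p50,
        "p95_ms": p95,
        "p95_alert_ms": p95 * headroom,  # hypothetical headroom over normal p95
        "error_rate": errors / requests,
    }
```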

Alert Thresholds: Configure tiered alerts (warning/critical) for different scenarios. Example: p95 latency > 500ms or error rate > 1% triggers immediate escalation.
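Tiered thresholds can be expressed as a small lookup table. The critical values below mirror the example in the text (p95 above 500 ms, error rate above 1%); the warning values and metric keys are illustrative assumptions.

```python
from typing import Optional

# Critical values follow the text's example; warning values are hypothetical.
TIERS = {
    "p95_latency_ms": {"warning": 400.0, "critical": 500.0},
    "error_rate": {"warning": 0.005, "critical": 0.01},
}


def classify(metric: str, value: float) -> Optional[str]:
    """Return 'critical', 'warning', or None for a single metric reading."""
    levels = TIERS[metric]
    if value > levels["critical"]:
        return "critical"
    if value > levels["warning"]:
        return "warning"
    return None
```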

Step 3: Testing Framework Development

Automated Test Suites: Create regression tests covering common prompts and expected outcomes. Include edge cases like typos, slang, and adversarial inputs in CI pipelines.
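A regression suite can start as a table of prompts with simple deterministic checks. In this sketch `generate` is a hypothetical stand-in for your model call, and each case checks for an expected substring; adversarial cases would typically need different checks, such as asserting that a system prompt is not leaked.

```python
from typing import Callable, List, NamedTuple


class Case(NamedTuple):
    name: str
    prompt: str
    must_contain: str  # simple deterministic check; real suites may also use judges


# Illustrative cases: a common prompt plus typo and slang variants of it.
CASES = [
    Case("common", "What is the capital of France?", "paris"),
    Case("typo", "Wht is teh capitol of France?", "paris"),
    Case("slang", "yo what's france's capital city fr", "paris"),
]


def run_suite(generate: Callable[[str], str], cases: List[Case] = CASES) -> List[str]:
    """Return the names of failing cases; an empty list means the suite passed."""
    failures = []
    for case in cases:
        output = generate(case.prompt)  # hypothetical model call
        if case.must_contain not in output.lower():
            failures.append(case.name)
    return failures
```

In a CI job, a non-empty failure list would be turned into a non-zero exit code so the pipeline stops.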

A/B Testing Infrastructure: Implement canary deployments with statistical methods like sequential testing to detect significant KPI changes before full rollout.
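Sequential testing lets a canary stop as soon as the evidence is strong enough instead of waiting for a fixed sample size. One classic instance is Wald's sequential probability ratio test (SPRT); this sketch applies it to the canary's error rate, assuming independent requests, a baseline error rate `p0`, and a regression rate `p1` worth catching. All parameter values are illustrative.

```python
import math
from typing import Iterable


def sprt_error_rate(outcomes: Iterable[bool], p0: float = 0.01, p1: float = 0.02,
                    alpha: float = 0.05, beta: float = 0.2) -> str:
    """Wald's SPRT on canary outcomes (True = error).

    H0: error rate is p0 (baseline); H1: error rate is p1 (regression)."""
    upper = math.log((1 - beta) / alpha)   # crossing it accepts H1: roll back
    lower = math.log(beta / (1 - alpha))   # crossing it accepts H0: keep rolling out
    llr_err = math.log(p1 / p0)            # log-likelihood ratio for one error
    llr_ok = math.log((1 - p1) / (1 - p0)) # log-likelihood ratio for one success
    llr = 0.0
    for is_error in outcomes:
        llr += llr_err if is_error else llr_ok
        if llr >= upper:
            return "regression"
        if llr <= lower:
            return "no_regression"
    return "continue"
```

`alpha` bounds the chance of rolling back a healthy canary and `beta` the chance of promoting a regressed one; "continue" means keep routing canary traffic and collecting outcomes.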

Regression Testing Protocols: Run post-deployment regression suites on synthetic and shadow traffic. Halt promotions immediately if critical tests fail or quality drops below thresholds.
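The promotion rule can be written as a small gate function. The result fields and the 0.9 quality threshold below are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SuiteResult:
    name: str
    passed: bool
    critical: bool  # a failed critical test blocks promotion on its own
    quality: float  # hypothetical 0-1 score from exact match or an LLM judge


def should_promote(results: List[SuiteResult], min_quality: float = 0.9) -> bool:
    """Gate a deployment: no failed critical tests and mean quality >= min_quality."""
    if not results:
        return False  # no evidence, no promotion
    if any(r.critical and not r.passed for r in results):
        return False
    mean_quality = sum(r.quality for r in results) / len(results)
    return mean_quality >= min_quality
```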

Step 4: Dashboard and Alerting Setup

Real-Time Visualization: Display live charts of latency percentiles, error rates, drift scores, and user ratings with drill-down capabilities to specific prompts and responses.

Stakeholder-Specific Views: Create role-based dashboards – ML engineers monitor quality metrics while product owners view high-level uptime and satisfaction scores.

Escalation Procedures: Route alerts based on severity and expertise areas, ensuring ML teams handle model-specific issues while infrastructure teams address capacity problems.
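Escalation by expertise area can be sketched as an ownership map from metric to on-call team, combined with severity to choose between paging and chat. The metric names, team names, and fallback owner are hypothetical.

```python
from typing import Dict, Tuple

# Hypothetical ownership map: model-quality metrics go to ML, capacity to infra.
OWNER: Dict[str, str] = {
    "hallucination_rate": "ml-oncall",
    "toxicity_rate": "ml-oncall",
    "drift_score": "ml-oncall",
    "p95_latency_ms": "infra-oncall",
    "gpu_utilization": "infra-oncall",
}


def escalation_target(metric: str, severity: str) -> Tuple[str, str]:
    """Return (team, channel): critical alerts page the owner, warnings go to chat."""
    team = OWNER.get(metric, "platform-oncall")  # hypothetical fallback owner
    channel = "page" if severity == "critical" else "chat"
    return team, channel
```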

5. Common Pitfalls and How to Avoid Them

5.1 Alert Fatigue

Problem: Excessive alerts, especially false positives, desensitize teams to real issues.

Solution: Set thresholds for significant changes only. Group related alerts into digest summaries to reduce noise.
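Grouping related alerts can be as simple as collapsing them per metric into one digest line. This sketch assumes alerts arrive as `(metric, message)` pairs collected over a digest interval; the output format is illustrative.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def build_digest(alerts: List[Tuple[str, str]]) -> List[str]:
    """Collapse (metric, message) alerts into one summary line per metric."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for metric, message in alerts:
        grouped[metric].append(message)
    lines = []
    for metric, messages in sorted(grouped.items()):
        # Report the count and only the most recent message for each metric.
        lines.append(f"{metric}: {len(messages)} alert(s), latest: {messages[-1]}")
    return lines
```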

5.2 Insufficient Baseline Data

Problem: Teams can't distinguish normal from abnormal behavior without proper baselines.

Solution: Conduct thorough pre-production load testing and monitor baseline KPIs during early production phases.

5.3 Ignoring Edge Cases

Problem: Rare inputs or usage patterns often reveal bugs missed by standard testing.

Solution: Build a library of real-world examples from production logs and integrate them into test suites.

5.4 Poor Team Communication

Problem: Disconnected data science, engineering, and operations teams slow incident response.

Solution: Establish regular syncs, shared runbooks, and role-based alert routing with clear handoff procedures.

6. Advanced Techniques and Future Considerations

Modern evaluation systems evolve beyond static thresholds toward intelligent anomaly detection and failure prediction:

6.1 AI-Driven Anomaly Detection

Machine learning models learn normal request patterns and automatically flag deviations, reducing false alerts while adapting to changing conditions without manual rule updates.
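As a lightweight stand-in for a learned model (this is a statistical detector, not an ML one), an exponentially weighted mean and variance capture the idea of a baseline that adapts to changing conditions. The class name and parameter values are illustrative assumptions.

```python
import math


class EwmaAnomalyDetector:
    """Flag readings far from an exponentially weighted mean (illustrative stand-in)."""

    def __init__(self, alpha: float = 0.1, z_threshold: float = 4.0, warmup: int = 30):
        self.alpha = alpha              # weight of the newest reading
        self.z_threshold = z_threshold  # how many std devs count as anomalous
        self.warmup = warmup            # readings to observe before flagging
        self.mean = 0.0
        self.var = 0.0
        self.n = 0

    def update(self, x: float) -> bool:
        """Score x against the current baseline, then fold it in; return True if anomalous."""
        self.n += 1
        if self.n == 1:
            self.mean = x
            return False
        std = math.sqrt(self.var)
        is_anomaly = (self.n > self.warmup and std > 0
                      and abs(x - self.mean) / std > self.z_threshold)
        # Incremental exponentially weighted mean and variance updates.
        diff = x - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)
        return is_anomaly
```

This sketch folds every reading into the baseline, including anomalies, so a sustained shift eventually becomes the new normal; skipping flagged points is a common alternative when spikes should not widen the baseline.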

6.2 Predictive Evaluation Models

Teams train models to forecast quality drops or latency spikes based on upstream signals like data drift or token distributions, enabling proactive resource allocation before SLA impact.

6.3 CI/CD Pipeline Integration

Embedding LLM evaluation in CI/CD ensures every code or prompt update undergoes automated testing and shadow traffic validation, catching regressions early through quality gates.
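A CI quality gate often compares a candidate's evaluation scores with the current baseline. In this sketch the metric names, the `quality_gate` helper, and the 0.02 absolute tolerance are assumptions; a metric missing from the candidate run counts as a drop.

```python
from typing import Dict


def quality_gate(baseline: Dict[str, float], candidate: Dict[str, float],
                 max_drop: float = 0.02) -> Dict[str, float]:
    """Return {metric: delta} for metrics whose score fell by more than max_drop."""
    regressions = {}
    for name, base in baseline.items():
        score = candidate.get(name, 0.0)  # missing metric is treated as a failure
        if base - score > max_drop:
            regressions[name] = score - base
    return regressions
```

A CI step would call `sys.exit(1)` when the returned dict is non-empty so the pipeline halts before the update ships.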

6.4 Emerging Trends

Watch for self-monitoring LLMs that attach confidence scores to responses, federated evaluation across edge and cloud environments, and multimodal monitoring for audio/image/text inputs.

7. Conclusion

Real-time LLM evaluation turns one-time checks into a continuous safety net. By monitoring latency, accuracy, and toxicity proactively, teams can reduce mean time to resolution and downtime, by up to 60% according to some estimates.

Implementation Timeline:

Weeks 1-2: Pilot setup with monitoring tools, data pipelines, and baseline KPIs.

Weeks 3-6: Deploy automated test suites, regression checks, and real-time dashboards.

Month 3: Full production with predictive evaluation, anomaly scoring, and user feedback loops.

The combination of metrics collection, stream processing, and feedback loops creates adaptive evaluation systems that handle data drift and evolving user needs. Advanced teams leverage AI-powered anomaly detection and predictive models to anticipate quality degradation before SLA breaches.

Schedule a demo to see how Future AGI can monitor your models in production within 24 hours, or start your free trial and integrate real-time evaluation into your existing pipeline today.
