Choosing an LLM API? Here's What 11 Providers Won't Tell You

Discover how 11 LLM APIs stack up in 2025 by comparing token pricing, latency, and context windows, so you can choose the perfect provider for your large language model project.

Introduction

The LLM API landscape has evolved dramatically. OpenAI’s GPT-4.1 reduced pricing by 26% while extending context to 1 million tokens. Claude Opus 4 enables 7-hour coding sessions at $15 per million tokens. Cohere offers free prototyping, and Google’s Vertex AI scales with consumption-based pricing.

With prices ranging from $0.40 to $15 per million input tokens and context windows expanding from 128K to 1M tokens, understanding the technical tradeoffs is essential.

This guide examines 11 major LLM API platforms to help you select the right provider for your technical requirements.

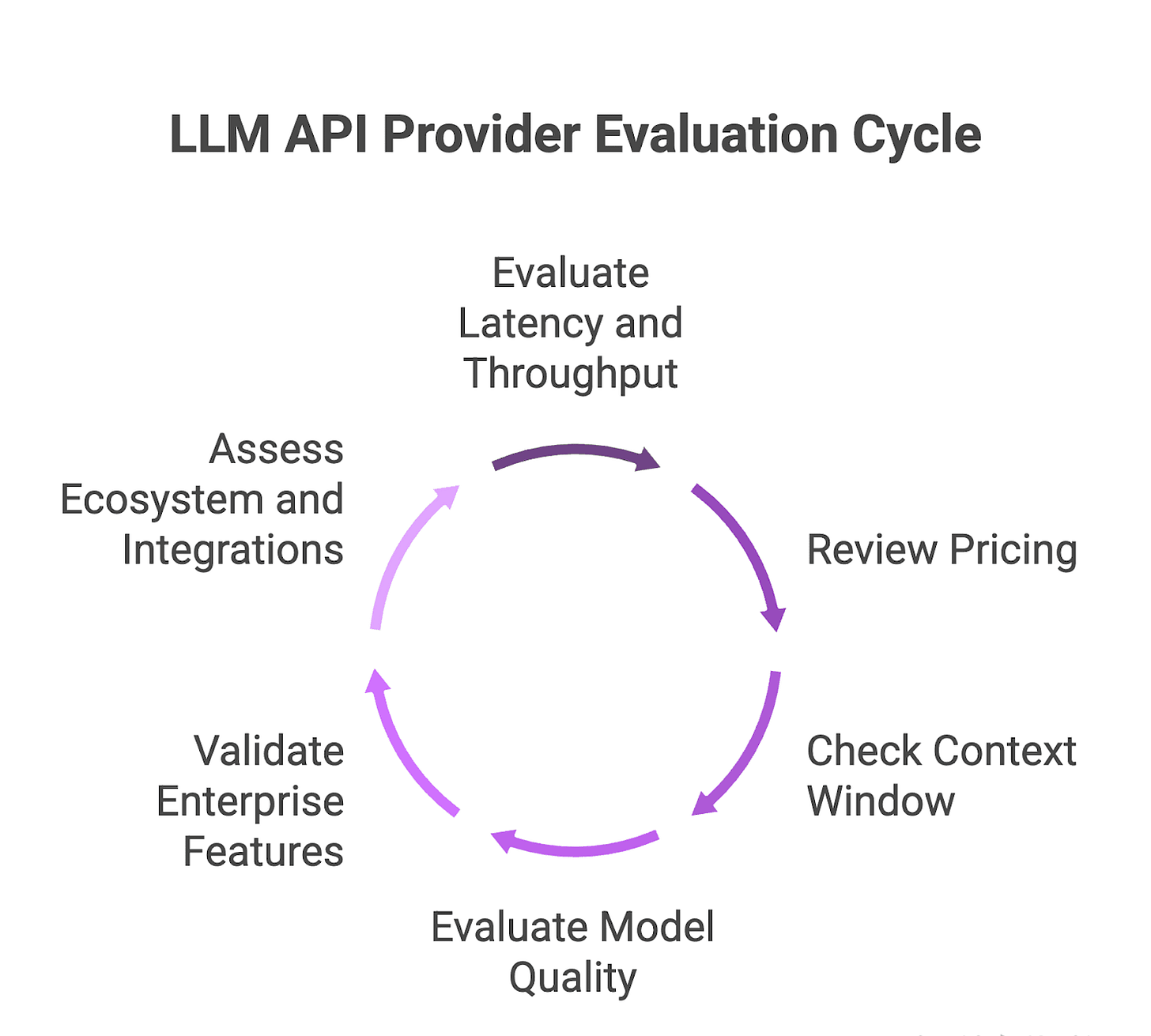

How to Evaluate an LLM API Provider

Six key factors determine provider suitability:

Latency and throughput: Measure time-to-first-token (TTFT) and tokens per second (TPS). Leading systems deliver the first token in under 0.5 seconds and exceed 1,000 tokens per second.

Pricing: Compare input/output token costs and billing models (flat tiers vs. pay-as-you-go). Costs range from $0.10 to $40 per million tokens depending on the operation.

Context window: Verify maximum tokens per request. Standard ranges are 32K-128K, with advanced models reaching 1M+.

Model quality: Evaluate benchmarks for reasoning, coding, summarization, and fact-checking. Performance varies significantly across specialized tasks.

Enterprise features: Check SLAs, compliance certifications (ISO, SOC, HIPAA), and dedicated infrastructure options for security and uptime requirements.

Ecosystem & integrations: Assess SDK quality, MCP support, and plugin compatibility for seamless integration.
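To make the latency and throughput factor concrete, here is a minimal sketch of measuring time-to-first-token and tokens per second over any streaming response. The `fake_stream` generator is a hypothetical stand-in for a provider's streaming client, not a real SDK call; swap in whatever iterator your provider returns.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_stream(tokens: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> dict:
    """Consume a token stream and report time-to-first-token and tokens/second."""
    start = clock()
    first_token_at = None
    count = 0
    for _ in tokens:
        if first_token_at is None:
            first_token_at = clock()  # moment the first token arrived
        count += 1
    end = clock()
    if first_token_at is None:
        return {"ttft_s": None, "tokens": 0, "tps": 0.0}
    generation_time = end - first_token_at
    # Throughput covers the generation phase only, excluding the initial wait.
    tps = (count - 1) / generation_time if count > 1 and generation_time > 0 else 0.0
    return {"ttft_s": first_token_at - start, "tokens": count, "tps": tps}


def fake_stream(n: int, first_delay: float, gap: float) -> Iterator[str]:
    """Hypothetical stand-in for a provider's streaming response."""
    time.sleep(first_delay)
    for i in range(n):
        if i:
            time.sleep(gap)
        yield f"tok{i}"


if __name__ == "__main__":
    print(measure_stream(fake_stream(n=20, first_delay=0.05, gap=0.005)))
```

Keeping the two numbers separate matters: a provider can have a fast first token and slow generation, or the reverse.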

1. OpenAI

OpenAI’s API powers ChatGPT and enterprise solutions through diverse model variants. The Chat Completions and Assistants endpoints support text, image, audio, and code tasks.

Models

GPT-4o: Multimodal model supporting text, visual, and audio inputs for composition, summarization, translation, image analysis, and audio transcription.

GPT-4.1: 1M-token context window, 40% more efficient, 26% lower cost per query than GPT-4o. Significant improvements in coding, instruction following, and long-context tasks.

GPT-4o mini: Budget-friendly variant at $0.15/1M input tokens, $0.60/1M output tokens. Achieves 82% on the MMLU benchmark.

Technical strengths

Reasoning: GPT-4o excels on MMLU language understanding tests and matches GPT-4 Turbo in reasoning benchmarks.

Coding: GPT-4.1 scores 54.6% on the SWE-bench Verified coding benchmark, a 21.4-percentage-point improvement over GPT-4o.

Multimodal: Single API call handles mixed text, image, and audio inputs.

Pricing

GPT-4o: $2.50/1M input tokens, $10/1M output tokens, with a 128K context window.

GPT-4.1: $2/1M input, $8/1M output. Mini and Nano versions reduce input costs to $0.40 and $0.10 per 1M tokens, respectively.

GPT-4o mini: New developers receive $18 free credits.

2. Anthropic

Anthropic’s API provides access to Claude models for conversation, coding, and agentic tasks. Features include code execution, configurable thinking budgets, and tool usage. Available via direct endpoints, Amazon Bedrock, and Google Cloud Vertex AI.

Models

Claude Opus 4: Scores 72.5% on SWE-bench coding assessment. Maintains context for tasks lasting up to 7 consecutive hours.

Claude Sonnet 4: Enhanced reasoning and coding at reduced cost with faster response times and lower token consumption.

Technical strengths

Extended sessions: Opus 4 maintains context for thousands of steps, enabling multi-hour refactoring or research workflows.

Safety guardrails: Pre-deployment safety evaluations according to AI Safety Level 2 (Sonnet 4) or Level 3 (Opus 4) to reduce risky outputs.

Autonomous agents: Opus 4 optimized for agent workflows with native tool integration and continuous decision-making.

Pricing

Claude Opus 4: $15/1M input tokens, $75/1M output tokens (up to 90% savings with prompt caching and batch processing).
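As a rough illustration of how per-token pricing turns into a per-request bill, the sketch below uses the Opus 4 rates quoted above. The `discount` parameter is a simplifying assumption: it applies one flat percentage to the whole request, whereas real caching and batch discounts apply to specific portions of the traffic, so treat the discounted figure as a best case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# Rates quoted above for Claude Opus 4.
OPUS_4 = TokenPrice(input_per_million=15.0, output_per_million=75.0)


def request_cost(price: TokenPrice, input_tokens: int, output_tokens: int,
                 discount: float = 0.0) -> float:
    """Cost in USD of one request; `discount` is a flat fraction (assumption)."""
    if not 0.0 <= discount <= 1.0:
        raise ValueError("discount must be between 0 and 1")
    base = (input_tokens * price.input_per_million
            + output_tokens * price.output_per_million) / 1_000_000
    return base * (1.0 - discount)


if __name__ == "__main__":
    full = request_cost(OPUS_4, input_tokens=10_000, output_tokens=2_000)
    best_case = request_cost(OPUS_4, 10_000, 2_000, discount=0.90)
    print(f"list price: ${full:.2f}, best case: ${best_case:.2f}")
```

Note that in this example the 2,000 output tokens cost as much as the 10,000 input tokens, which is why output-heavy workloads are the ones to watch.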

3. Gemini

Google’s Gemini API offers advanced multimodality, ultra-long context windows, and integration with search and cloud ecosystems. Three preview models serve different requirements: 2.5 Pro (deep reasoning), 2.5 Flash (speed), 2.0 Flash-Lite (cost savings). Native support for text, audio, images, and video with optional Google Search grounding.

Models

Gemini 2.5 Pro: 1M token window (2M coming), optimal for processing extensive texts, codebases, or multimedia transcripts.

Gemini 2.5 Flash: Professional-level reasoning with sub-second latency. Includes text-to-speech support.

Gemini 2.0 Flash-Lite: Cost-effective option matching 1.5 Flash quality at equivalent pricing for high-volume tasks.

Technical strengths

Native multimodality: Handles text, voice, images, and video in single API requests.

Ultra-long contexts: 1M tokens—significantly above standard 32K—allows input of entire books, logs, or code repositories.

Web-scale retrieval: Google Search grounding provides inline citations and current information, reducing hallucinations.
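Before sending an entire book or repository, it helps to check whether it plausibly fits the window. The sketch below uses an assumed heuristic of about four characters per token; that ratio is not a tokenizer and varies by language and content, so use the provider's own token counter when the margin is tight.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; chars_per_token is an assumed heuristic."""
    return math.ceil(len(text) / chars_per_token)


def fits_context(text: str, context_window: int, reserved_output: int = 0) -> bool:
    """True if the estimated prompt plus reserved output tokens fit the window."""
    return estimate_tokens(text) + reserved_output <= context_window


if __name__ == "__main__":
    document = "x" * 2_000_000  # about 500K estimated tokens
    print(fits_context(document, context_window=128_000))    # too large for 128K
    print(fits_context(document, context_window=1_000_000))  # fits a 1M window
```

Reserving room for the response (`reserved_output`) avoids the common mistake of filling the window with input and leaving nothing for the answer.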

Pricing

Gemini 2.5 Pro Preview: $1.25–$2.50/1M prompt tokens and $10–$15/1M output tokens, depending on prompt length. Google Search grounding is free up to 1,500 requests daily, then $35/1,000 requests.

Gemini 2.5 Flash Preview: $0.60/1M output tokens (standard), $3.50/1M output tokens with thinking (chain-of-thought reasoning) enabled.

4. Microsoft (Azure OpenAI Service)

Azure OpenAI Service integrates OpenAI’s models with Azure data, security, and analytics capabilities. It exposes the same GPT-4o endpoints with additional enterprise controls: virtual network isolation, regional data zones, and compliance controls. Billing is pay-as-you-go, and Provisioned Throughput Units (PTUs) can be reserved for consistent performance.

Models

GPT-4o (multimodal): 128K-token context (1M approaching) with vision, voice, and text capabilities on accelerated hardware.

GPT-4o mini (budget multimodal): Up to 50% lower pricing than standard GPT-4o while preserving audio input and vision.

Technical strengths

Enterprise security: Azure compliance framework (ISO, SOC, HIPAA), private endpoints, and role-based access controls.

SLA-backed uptime: 99.9% uptime SLA, plus a latency SLA for token generation on provisioned deployments.

Regional data residency: 27 global Azure regions with specialized Data Zones in US and EU.

Pricing

OpenAI-aligned billing: $5/1M input, $20/1M output for GPT-4o; $0.60/1M input, $2.40/1M output for GPT-4o mini (varies by region).

Committed use discounts: Up to 50% savings through hourly PTU reservations.

5. Amazon (Bedrock)

Amazon Bedrock provides a unified, fully managed API for foundation models from multiple AI vendors. Features include model fine-tuning, RAG pipelines using Knowledge Bases, and autonomous agent orchestration with AWS security controls.

Models

Bedrock provides access to:

Anthropic Claude series (3 Opus, Sonnet)

Cohere Command models

Mistral AI (Medium, Large)

AI21 Labs Jurassic-2 and Studio

Meta Llama 3 (up to 70B)

Amazon Titan (Text, Embeddings)

Technical strengths

Serverless inference: Automatic scaling for traffic spikes via InvokeModel API with per-token pricing.

Built-in RAG & agents: Knowledge Bases anchor outputs in proprietary data. Agents link calls across FMs, APIs, and databases.

Consolidated billing: Unified invoicing across all supported models eliminates individual vendor setups.

Pricing

On-Demand: Per token at established rates. Batch-mode inference at 50% reduction.

Provisioned Throughput: Reserve model units (e.g., Claude Instant at $39.60/hour) for high-volume applications with up to 50% batch-mode discounts.
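Whether a reserved model unit pays off depends on volume. The sketch below turns the $39.60/hour example into a monthly figure and a break-even token count. The on-demand rate passed in is a hypothetical placeholder (this guide does not list one), the 30-day month is a simplification, and the calculation ignores the throughput ceiling of a single model unit.

```python
HOURS_PER_MONTH = 24 * 30  # simplifying assumption: a 30-day month


def monthly_provisioned_cost(hourly_rate: float, units: int = 1) -> float:
    """USD per month for `units` model units running around the clock."""
    return hourly_rate * HOURS_PER_MONTH * units


def break_even_tokens(hourly_rate: float, on_demand_per_million: float) -> float:
    """Monthly token volume where one provisioned unit matches on-demand spend."""
    if on_demand_per_million <= 0:
        raise ValueError("on_demand_per_million must be positive")
    return monthly_provisioned_cost(hourly_rate) / on_demand_per_million * 1_000_000


if __name__ == "__main__":
    monthly = monthly_provisioned_cost(39.60)
    # 2.00 USD per 1M tokens is a hypothetical blended on-demand rate.
    tokens = break_even_tokens(39.60, on_demand_per_million=2.00)
    print(f"monthly: ${monthly:,.2f}, break-even: {tokens:,.0f} tokens/month")
```

Below the break-even volume, on-demand is cheaper; above it, reserved capacity wins, provided one unit can actually serve that much traffic.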

6. Cohere (Command)

Cohere’s Command API specializes in retrieval and tool utilization with extensive contexts and fine-tuning options. Powers RAG pipelines, chatbots, and linguistic tools across multiple languages.

Models

Command R (128K context): Optimized for retrieval-augmented generation and tool use, accepting up to 128K input tokens per call.

Command R7B (efficient edge): 7B-parameter model for on-device or edge inference with low-latency, cost-effective implementation.

Command A (256K context, enterprise): 256K-token window for complex agentic activities with superior multilingual capabilities.

Technical strengths

RAG & tool optimization: Excellent performance on retrieval-augmented tasks with seamless external API integration.

Multilingual: Handles 10+ major languages with consistent latency and accuracy.

High throughput: Achieves over 500 tokens per second on optimal hardware for real-time document search and summarization.

Pricing

Command R: $0.15/1M input tokens, $0.60/1M output tokens.

Command R7B: $0.0375/1M input tokens, $0.15/1M output tokens.

Fine-tuning: Starting at $3/1M tokens during training.

7. Mistral

Mistral AI provides open-weight models through its public API without license fees or vendor lock-in. Supports chatbots, code assistants, and research tools requiring superior text and coding performance.

Models

Mistral 7B: 7.3B parameters outperforming larger 13B and 34B models on language benchmarks. Uses Grouped-Query Attention for sub-second inference.

Codestral Embed: Specialized embedding model for code retrieval, outperforming OpenAI’s embeddings.

Mistral Medium 3: Performance equivalent to larger models at one-eighth the cost. A 4,096-token sliding window stacked over 32 layers gives an effective context of about 131K tokens.

Technical strengths

Open-source performance: Apache 2.0-licensed models match or surpass proprietary alternatives for research and production.

Sliding Windows and Grouped Query: Improves inference speed and extends context to 131K tokens with minimal memory cost.

Apache 2.0 license: Unlimited commercial use, modification, and distribution without legal constraints.
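The 131K figure quoted for sliding-window attention is simple arithmetic: window size times number of layers. The sketch below assumes the usual reading, in which each layer lets a token attend to the previous `window_size` positions, so information can travel one window further back per layer. The cache helper assumes a rolling buffer that never stores more than one window per layer, which is where the "minimal memory cost" comes from.

```python
def theoretical_attention_span(window_size: int, num_layers: int) -> int:
    """Upper bound on how far back information can propagate through the stack."""
    return window_size * num_layers


def cached_positions(sequence_length: int, window_size: int) -> int:
    """Positions kept per layer in a rolling key/value cache (assumed design)."""
    return min(sequence_length, window_size)


if __name__ == "__main__":
    print(theoretical_attention_span(window_size=4096, num_layers=32))
    print(cached_positions(sequence_length=100_000, window_size=4096))
```

The span is a theoretical ceiling on reach, not a guarantee that the model uses distant context well.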

Pricing

Mistral 7B: $0.25/1M input and output tokens (API or free self-hosting).

Mistral Medium 3: $0.40/1M input, $2.00/1M output (free self-hosting option).

Complete self-hosting: Apache 2.0 allows local deployment with only infrastructure costs.

8. Together AI

Together AI provides serverless execution for 200+ open-source and partner LLMs through a unified API backed by scalable GPU clusters. Pay-per-token billing and self-service GPU rentals optimize cost, performance, and capacity.

Models

Llama 4 Maverick: 400B parameters with 1M token context for extensive conversation, summarization, and coding.

Llama 4 Scout: 109B-parameter variant at $0.18/1M input, $0.59/1M output for development and testing.

Llama 3.x series: Lite/Turbo/Reference tiers (up to 70B) balancing speed and quality.

DeepSeek-R1-0528: Open-source reasoning model with 23K-token thinking, 87.5% on AIME benchmark at $7/1M tokens.

Qwen 2.5-7B-Instruct-Turbo: 7B conversation model with 131K-token context at $0.30/1M input, $0.80/1M output.

FLUX Tools: Image-generation models charged per megapixel and per step.

Technical strengths

Rapid prototyping: Instant serverless endpoints and extensive code samples enable quick development cycles.

Open-source repository: 200+ models covering conversation, code, vision, and embeddings for flexible FM combinations.

Pricing

Llama 4 Maverick: $0.27/1M input, $0.85/1M output via API.

Qwen 2.5: $0.30/1M input, $0.80/1M output.

GPU clusters: On-demand H100 SXM at $1.75/hour; reserved capacity for large-scale projects.

9. Fireworks AI

Fireworks AI provides serverless inference executing optimized open models with exceptional speed, eliminating GPU management. SOC 2 Type II and HIPAA compliant with multi-cloud GPU orchestration across 15+ locations.

Models

DeepSeek R1: 0528 update enhances reasoning precision with vision inlining for document-level understanding.

Llama 4 Maverick: Meta’s 400B-parameter variant with 1M token window optimized for sub-second latency.

Gemma 3 27B: Google’s 27B instruct-tuned model with multimodal support and 128K context for low-latency inference.

Technical strengths

FireAttention inference engine: Custom CUDA kernel stack providing up to 12× accelerated long-context inference and 4× performance improvements over vLLM using FP16/FP8 optimization.

Multimodal support: Integrates text, graphics, and audio in single API for voice bots, visual assistants, and composite AI agents.

Compliance: SOC 2 and HIPAA compliant with audit logs and VPC isolation on AWS and GCP.

Global GPU orchestration: Automatically distributes GPUs across 10+ cloud platforms and 15+ locations for high availability.

Pricing

Image generation: $0.00013 per denoising step (~$0.0039 for a 30-step SDXL image). FLUX models: $0.00035–$0.0005 per step.

Embeddings: $0.008/1M input tokens (≤150M parameters); $0.016+ for larger models.

Free credits: $1 for new accounts.

10. Hugging Face (Self-Hosted)

Hugging Face enables execution of open-source models on your infrastructure with complete control over performance, data privacy, and costs. Deploy Transformers, Diffusers, and Sentence-Transformers behind private VPC endpoints or on-prem clusters.

Models

Over 60,000 Transformers, Diffusers, and Sentence-Transformers: Choose from 1.7M models on the Hub, including Stable Diffusion, Whisper, BERT, and GPT variants for self-hosted deployment.

Technical strengths

Complete control: Choose hardware, OS, and network configuration under Apache 2.0 or permissive licenses. Modify or fork any model.

Custom tools: Integrate proprietary monitoring, scaling algorithms, or private VPC endpoints with unified huggingface_hub SDK.

Unified Client SDK: Same Python or JavaScript client for cloud endpoints and local deployments—no code changes between testing and production.

Pricing

Inference Endpoints: Serverless free tier for testing. Managed infrastructure starts at $0.033 per CPU core and $0.50 per GPU per hour, billed by the minute.

Self-hosting: Free model execution on your servers—only infrastructure costs apply. Kubernetes or other orchestration for automated scaling.

11. Replicate

Replicate offers unified API access to 1,000+ community and proprietary ML models including Claude, DeepSeek, Flux, and Llama without server management. Swap models with single-line code changes.

Models

Community and proprietary models: Access Claude (Anthropic), DeepSeek R1, Flux image/audio tools, Llama variants, Veo, Ideogram from single interface.

Model transition: Change from CPU-based public model to A100-powered proprietary model by modifying model reference string.

Technical strengths

Flexible billing: Public models charge GPU time ($0.000225/sec on T4 to $0.00115/sec on A100). Claude bills per token ($3 input/$15 output per million).

Hardware selection: Choose CPU, GPU type, or TPU. Replicate automatically scales and charges only for resources and duration used.

Auto-scaling: Clusters activate on demand and deactivate when work completes—no fees for unused capacity.

Pricing

GPU billing: T4 at $0.000225/sec; A100 at $0.00115/sec with per-second invoicing.

Claude-3.7-Sonnet: $3/1M input tokens, $15/1M output tokens. Flux-1.1-Pro charged on input/output ratios.

Free credits: $10 for new clients.
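Per-second prices are hard to compare at a glance, so the sketch below converts the T4 and A100 rates quoted above into hourly figures and shows how far the $10 credit stretches. It assumes billing is strictly rate times seconds, with no minimum charge or cold-start time.

```python
GPU_RATES_PER_SECOND = {"T4": 0.000225, "A100": 0.00115}  # USD, as quoted above


def gpu_run_cost(gpu: str, seconds: float) -> float:
    """USD for one run, assuming pure per-second billing."""
    return GPU_RATES_PER_SECOND[gpu] * seconds


def hourly_rate(gpu: str) -> float:
    """Equivalent USD per hour of continuous use."""
    return gpu_run_cost(gpu, 3600)


def seconds_for_budget(gpu: str, budget_usd: float) -> float:
    """How many GPU-seconds a budget buys."""
    return budget_usd / GPU_RATES_PER_SECOND[gpu]


if __name__ == "__main__":
    for gpu in GPU_RATES_PER_SECOND:
        hours = seconds_for_budget(gpu, 10.0) / 3600
        print(f"{gpu}: ${hourly_rate(gpu):.2f}/hour, $10 buys {hours:.1f} hours")
```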

Best-Fit Use Cases

Startups & SMBs: Together AI (pay-as-you-go with 200+ permissively licensed models) and Mistral (Apache 2.0-licensed models with zero licensing costs).

Enterprises: Azure OpenAI Service (99.9% uptime SLA, ISO/SOC/HIPAA compliance) and Amazon Bedrock (unified SLA with integrated security).

Multimodal: GPT-4o (native text/audio/image processing in single API) and Fireworks AI (FireAttention engine for optimized multimodal inference).

Research and fine-tuning: Cohere (fine-tuning API for domain-specific applications) and Hugging Face (60,000+ open-source models on private infrastructure).

Emerging Trends & What’s Next

Three major developments are reshaping the LLM API landscape:

New competitors: China’s DeepSeek now competes with GPT-4.1 through its R1-0528 update (enhanced reasoning, reduced hallucinations). Elon Musk’s xAI prepares Grok 3.5 API for extensive testing. Perplexity introduces pplx-API for grounded, search-based responses.

Ultra-long context: GPT-4.1 and Gemini 2.5 Pro process up to 1M tokens per request, enabling comprehensive book summaries and extensive code repository analysis.

On-device and federated inference: Lightweight LLMs operate locally on smartphones and private networks, securely transmitting updates without central servers. Enables real-time AI for offline assistants and decentralized enterprise operations.

Conclusion

Selecting an LLM API requires balancing context capacity, cost, and speed. Low-latency endpoints like Gemini 2.5 Flash respond in under a second, though enabling reasoning raises the per-token cost. Open-source solutions like Mistral or Together AI offer lower costs but may require customization for latency-sensitive operations.

Recommended approach: Pilot first. Run A/B tests between two providers using identical prompts to evaluate real-world latency, token usage, and output quality. This identifies which API best fits your requirements and reveals hidden tradeoffs—whether an API maintains quality under high demand or develops hallucinations on extended contexts.
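A pilot like this does not need much tooling. The sketch below runs identical prompts through two providers and records latency and output size. The two provider functions are hypothetical stubs; in practice each would wrap a real SDK call. Word count stands in for token usage, and output quality still has to be judged separately, by people or by an evaluation tool.

```python
import statistics
import time
from typing import Callable, Dict, List

Provider = Callable[[str], str]  # hypothetical: takes a prompt, returns text


def run_pilot(providers: Dict[str, Provider], prompts: List[str]) -> Dict[str, dict]:
    """Send the same prompts to every provider and summarize the results."""
    if not prompts:
        raise ValueError("at least one prompt is required")
    results = {}
    for name, call in providers.items():
        latencies, lengths = [], []
        for prompt in prompts:
            start = time.perf_counter()
            output = call(prompt)
            latencies.append(time.perf_counter() - start)
            lengths.append(len(output.split()))  # crude proxy for token usage
        results[name] = {
            "median_latency_s": statistics.median(latencies),
            "mean_output_words": statistics.fmean(lengths),
            "calls": len(prompts),
        }
    return results


def provider_a(prompt: str) -> str:
    """Stub provider: instant reply."""
    return "stub answer from A to: " + prompt


def provider_b(prompt: str) -> str:
    """Stub provider: slower, shorter reply."""
    time.sleep(0.01)
    return "B: " + prompt


if __name__ == "__main__":
    prompts = ["Summarize this ticket.", "Write a SQL query for monthly revenue."]
    for name, stats in run_pilot({"A": provider_a, "B": provider_b}, prompts).items():
        print(name, stats)
```

Using the median rather than the mean keeps one slow outlier from distorting the comparison.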

Try Future AGI’s evaluation platform to compare all 11 providers side-by-side.

FAQs

What’s an LLM API?

An LLM API is a service interface that lets programs send text prompts to a large language model and receive generated responses. It brings NLP capabilities such as conversation, summarization, and code completion into software through simple HTTP requests.
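Here is roughly what such an HTTP request looks like. The sketch builds a chat-style JSON request without sending it. The base URL, API key, and model name are placeholders, and the `model`/`messages` payload shape is an assumption modeled on the widely used chat-completions style; check your provider's reference for its exact fields.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-style POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # All three values below are placeholders, not a real endpoint or key.
    req = build_chat_request("https://api.example.com/v1", "sk-placeholder",
                             "example-model", "Summarize this paragraph.")
    print(req.get_method(), req.full_url)
    # With a real endpoint and key, urllib.request.urlopen(req) would send it.
```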

Can I switch providers mid-project?

Yes. Hide provider-specific calls behind an abstraction layer or middleware (such as LangChain, LiteLLM, or a bespoke SDK) so you can swap models or vendors without rewriting core functionality.
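A bespoke abstraction layer can be quite small. The sketch below is one possible shape, not how LangChain or LiteLLM work internally: application code talks to `LLMClient`, and each vendor sits behind an adapter with the same `complete` method. `EchoProvider` is a hypothetical stub standing in for a real SDK wrapper.

```python
from typing import Dict, Protocol


class ChatProvider(Protocol):
    """Interface every vendor adapter must satisfy."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Hypothetical adapter; a real one would call a vendor SDK here."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class LLMClient:
    """The only class application code touches; vendors are swappable."""

    def __init__(self, providers: Dict[str, ChatProvider], default: str) -> None:
        if default not in providers:
            raise KeyError(f"unknown provider: {default}")
        self._providers = providers
        self._active = default

    def use(self, name: str) -> None:
        """Switch the active provider without changing any calling code."""
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        return self._providers[self._active].complete(prompt)


if __name__ == "__main__":
    client = LLMClient({"vendor-a": EchoProvider("vendor-a"),
                        "vendor-b": EchoProvider("vendor-b")}, default="vendor-a")
    print(client.complete("hello"))
    client.use("vendor-b")  # a one-line change, e.g. driven by configuration
    print(client.complete("hello"))
```

The harder part in practice is that prompts tuned for one model may behave differently on another, so a switch should be followed by a regression run.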

How do I benchmark cost vs. performance?

Use a simple ratio: cost per 1 million tokens divided by achieved throughput (tokens per second), where a lower value means more speed per dollar. Tools such as Future AGI’s experiment feature can run parallel tests that log live latency and per-token rates.
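The formula above fits in a few lines. In this sketch the provider names and their numbers are made up for illustration; plug in the prices from your invoices and the throughput you measured yourself.

```python
def cost_performance_ratio(cost_per_million: float, tokens_per_second: float) -> float:
    """Cost per 1M tokens divided by throughput; lower is better."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return cost_per_million / tokens_per_second


# Hypothetical measurements, for illustration only.
candidates = {
    "provider-x": {"cost_per_million": 0.60, "tokens_per_second": 200.0},
    "provider-y": {"cost_per_million": 15.00, "tokens_per_second": 60.0},
}

ranked = sorted(candidates, key=lambda name: cost_performance_ratio(**candidates[name]))

if __name__ == "__main__":
    for name in ranked:
        ratio = cost_performance_ratio(**candidates[name])
        print(f"{name}: {ratio:.4f}")
```

The ratio says nothing about answer quality, so it works best as a tiebreaker between providers that already pass your quality bar.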

Are open-source models production-ready?

Often, yes. Several open-source LLMs (such as Mistral 7B and Llama 3.x) deliver near-proprietary performance and already power RAG and chatbot systems in production. Even so, run pilot tests and set up monitoring to detect drift or quality problems.

